Best practices and troubleshooting

Get the most out of the Cognite Power BI connector with these best practices and troubleshooting tips.

Performance best practices

The performance of the Cognite Power BI connector depends on the Cognite Data Fusion (CDF) resource types you access. For example, reading 1M data points takes around 2:30 to 3:00 minutes (6K data points per second). Each full request takes an average of 120 ms, and the connector adds an average of 20 ms to CDF requests.

Follow these general best practices to make sure you get the best and most reliable performance:

- Don't use

ORexpressions or expanding tables. - Use multiple queries when possible.

- Use incremental refresh.

- Partition data sets if possible.

- Keep only the data you need. Remove unnecessary columns and data.

- Keep historical data in a separate report if you don't need the data daily. Refresh the historical data report when you need the data.

- Reduce the number of calculations/operations in the front end, and try to do as much as possible in the data modeling.

Write performant queries

The OData service accepts multiple concurrent requests and processes the requests in parallel. Power BI also dispatches multiple queries concurrently when possible.

Therefore, it's better to compose and use multiple queries instead of a single complex query with, for example, OR expressions or expands. A single complex query must be iterated sequentially with the added round-trip latency for each request.

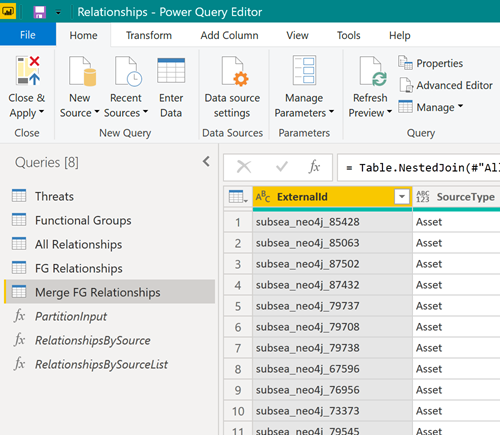

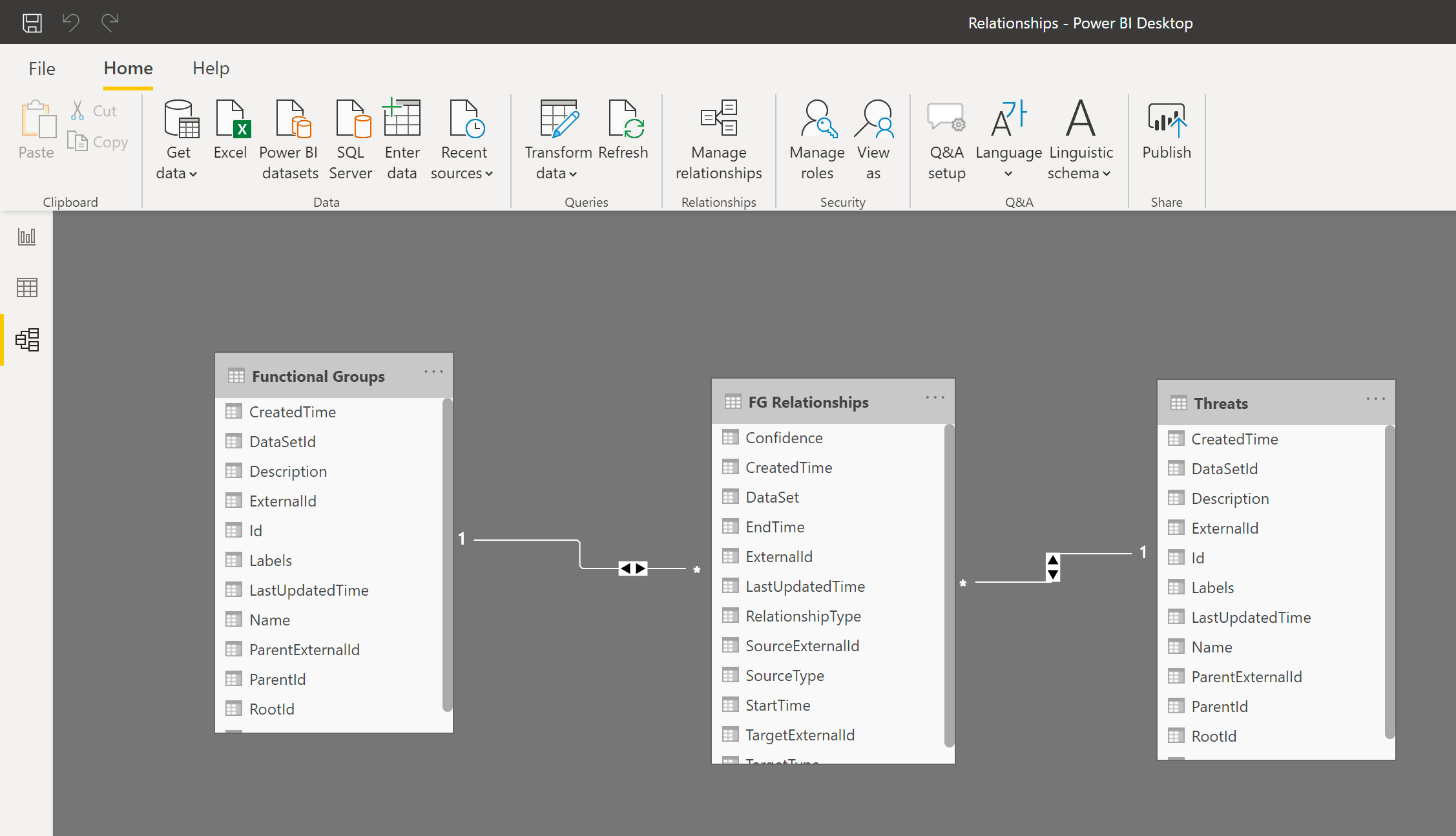

Instead, try to download the data using multiple queries:

Next, join the resulting tables in the Power BI data model to work with the tables as if they were a single table:

Use incremental refresh

Incremental refresh enables large datasets in Power BI with the following benefits:

- Only data that has changed needs to be refreshed.

- You don't have to maintain long-running connections to source systems.

- Less data to refresh reduces the overall consumption of memory and other resources.

Learn more about incremental refresh.

Partition large data sets

If you need to download large data sets, try to partition the data set and have a separate query to read each partition. Power BI processes multiple queries concurrently, and partitioning the data set can significantly improve the performance.

For example, if you read data points from the last two years, try splitting the query into two queries, each reading one year of data. Then merge (concatenate) the tables in Power BI.

Clear the cache

Power BI caches the service manifest that describes the schema of the OData service. When the connector or OData service is upgraded, you may need to clear the Power BI cache to force Power BI to re-read the service manifest.

To clear the cache:

-

In

Power BI Desktop, select File > Options & Settings > Options > Data Load. -

Under Data Cache Management Options, select Clear Cache.

Property naming in metadata and CDF RAW

Property keys for metadata and CDF staging area (RAW) must be valid identifiers and can only contain letters, numbers, or underscores. The OData service rewrites any other character to an underscore. For the best and most predictable results, make sure that ingested data follow this naming convention for property keys: ^[a-zA-Z][_a-za-z0-9]\*[a-zA-Z0-9]\$.

Troubleshooting

Find information to help you troubleshoot issues using CDF as a data source in Power BI.

Queries take too long

A CDF project can contain hundreds of millions of rows of data, and loading them all into Power BI isn't feasible. If your query takes hours to run, you are most likely trying to load too much data.

Use the information in the filtering article to limit the amount of data loaded into Power BI.

Not getting all results

If you get fewer results than expected, you may be using a filter function that CDF doesn't support, such as startswith on the Name column for TimeSeries.

For more information, see Supported filtering for CDF resources.

Unable to retrieve minimal values from CDF RAW

If you're using data from the CDF staging area, CDF RAW, in Power BI, you can experience issues retrieving small numbers in exponential notation.

CDF RAW doesn't have a schema, but the OData libraries in Power BI try to select the correct format for the data. Currently, Power BI chooses the wrong decoder for small numbers in exponential notation, and you may get an error similar to this:

DataSource,Error: OData: Cannot convert the literal '2.89999206870561 to the expected type 'Edm.Decimal',

To resolve this issue, ingest the values into CDF RAW as strings instead of numbers, and convert the strings back to numbers in Power BI, for example, using the Decimal.From Power Query M-function. You won't lose precision, and because most JSON decoders accept strings for numbers, clients that expect numbers will still work.